University of Texas at Austin computer scientists have taught an AI agent to infer its environment from just a few glimpses of its surroundings. The new skill, previously believed to be restricted to humans, is an important step towards the development of effective search-and-rescue robots to aid rescuers in high-risk missions.

One of the hardest problems in robot orientation is getting robots to assess a new environment efficiently. Existing technology lets robots perform simple object identification and retrieval tasks in an environment they have experienced before, but until now they have not been able to gather and process visual information quickly enough to navigate an unfamiliar environment effectively.

Deep Learning To Help Robots Make Sense Of Visual Cues

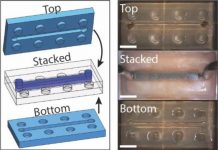

The University of Texas team used deep learning to train their AI agent on thousands of 360-degree images of various types of environments.

The goal is a more autonomous agent. “We want an agent that’s generally equipped to enter environments and be ready for new perception tasks as they arise,” says lead researcher Professor Kristen Grauman. “It behaves in a way that’s versatile and able to succeed at different tasks because it has learned useful patterns about the visual world.”

The way the AI processes visual cues is similar to the way a human uses existing information to make sense of the immediate environment.

“Just as you bring in prior information about the regularities that exist in previously experienced environments — like all the grocery stores you have ever been to — this agent searches in a nonexhaustive way,” Grauman said. “It learns to make intelligent guesses about where to gather visual information to succeed in perception tasks.”
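The idea of looking around "in a nonexhaustive way" can be illustrated with a toy sketch. This is not the researchers' method: their agent learns where to look through deep learning, whereas the sketch below swaps in a simple hand-written rule (always look at the spot farthest from everything seen so far) and fills in unseen views by copying the nearest observed one. The grid size, the function names `choose_glimpses` and `fill_in`, and the placeholder "pixels" are all assumptions made for illustration.

```python
import math


def angular_distance(a, b, n_azimuth):
    """Grid distance between two views (row, col); the azimuth axis wraps around."""
    d_row = abs(a[0] - b[0])
    d_col = abs(a[1] - b[1])
    d_col = min(d_col, n_azimuth - d_col)  # a 360-degree panorama has no left or right edge
    return math.hypot(d_row, d_col)


def choose_glimpses(n_elevation, n_azimuth, budget, start=(0, 0)):
    """Pick up to `budget` views, each as far as possible from the views already seen.

    Hand-written stand-in for a learned look-around policy (an assumption).
    """
    all_views = [(r, c) for r in range(n_elevation) for c in range(n_azimuth)]
    seen = [start]
    while len(seen) < budget:
        candidates = [v for v in all_views if v not in seen]
        if not candidates:
            break  # the whole panorama has been observed
        next_view = max(
            candidates,
            key=lambda v: min(angular_distance(v, s, n_azimuth) for s in seen),
        )
        seen.append(next_view)
    return seen


def fill_in(observations, n_elevation, n_azimuth):
    """Guess every view in the panorama by copying the nearest observed view."""
    guess = {}
    for r in range(n_elevation):
        for c in range(n_azimuth):
            nearest = min(
                observations,
                key=lambda s: angular_distance((r, c), s, n_azimuth),
            )
            guess[(r, c)] = observations[nearest]
    return guess


# Four glimpses of a hypothetical 4 x 8 panorama grid, then a guess at all 32 views.
views = choose_glimpses(4, 8, budget=4)
observations = {v: f"pixels at {v}" for v in views}  # placeholder for real image data
scene = fill_in(observations, 4, 8)
print(len(views), "glimpses ->", len(scene), "estimated views")
```

A trained agent would replace both hand-written rules with learned ones, which is what lets it make the "intelligent guesses" Grauman describes rather than simply spreading its glimpses evenly.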

Further Developments: Mobile Robots

For now, the AI agent is stationary: it can point a camera in any direction to gather information about the environment, but it cannot move. The research team has announced that its next focus will be the development of a fully mobile robot, a further step towards AI technology that can be applied in real-life rescue situations.

This research was partly funded by the U.S. Defense Advanced Research Projects Agency, the U.S. Air Force Office of Scientific Research, IBM Corp. and Sony Corp. The research team, led by Professor Kristen Grauman, includes Ph.D. candidate Santhosh Ramakrishnan and former Ph.D. candidate Dinesh Jayaraman (now at the University of California, Berkeley). The results were published in the journal Science Robotics.